Vision Transformer (ViT) Implementation for Spoofing Detection

Jan 2, 2022


🔬 Project Overview

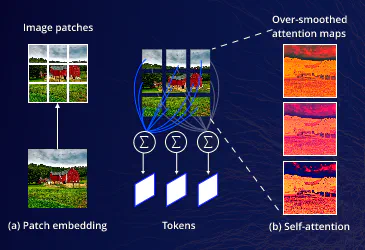

This implementation builds an image classifier that detects spoofing attempts in digital media. Spoofing detection is a security-critical task in which the model learns to distinguish authentic images from manipulated or falsified ones. The code uses a Vision Transformer (ViT), an architecture that has matched or exceeded traditional convolutional networks on many image recognition benchmarks, particularly when pre-trained on large datasets.

⚙️ Technical Details

For optimal results when implementing this code:

- Dataset Organization: Ensure your dataset is organized with “train” and “test” directories, each containing subdirectories for each class (e.g., “real” and “spoof”)

- Hardware Requirements: Vision Transformers are computationally intensive. While the code will run on CPU, GPU acceleration is strongly recommended for practical training times

- Hyperparameter Tuning: The provided values (batch size, learning rate, etc.) are reasonable starting points, but optimal values may depend on your specific dataset and hardware

- Model Size Considerations: The ViT-Large model has approximately 307M parameters. If computational resources are limited, consider using “vit_base_patch16_224” (86M parameters) instead

- Early Stopping: For production environments, consider implementing early stopping based on validation metrics to prevent overfitting and reduce training time
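The dataset layout described in the list above can be checked before training starts. The following is a minimal sketch, not code from the repository: the helper name `check_dataset_layout` is hypothetical, and the class names "real" and "spoof" are taken from the example in the list, so adjust them to match your own data.

```python
from pathlib import Path


def check_dataset_layout(root, splits=("train", "test"), classes=("real", "spoof")):
    """Return a list of problems found under `root`.

    An empty list means every <split>/<class> directory exists and
    contains at least one file. (Hypothetical helper for illustration.)
    """
    root = Path(root)
    problems = []
    for split in splits:
        for cls in classes:
            class_dir = root / split / cls
            if not class_dir.is_dir():
                problems.append(f"missing directory: {split}/{cls}")
            elif not any(p.is_file() for p in class_dir.iterdir()):
                problems.append(f"no files in: {split}/{cls}")
    return problems
```

Calling `check_dataset_layout("path/to/dataset")` and printing the returned list before launching a long training run is a cheap way to catch a misnamed or empty folder early.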
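The early stopping idea mentioned in the list above can be sketched in a few lines of plain Python. This is an illustrative example, not the repository's implementation: the `EarlyStopping` class, its `patience` and `min_delta` parameters, and the loss values are all assumptions, and it presumes you compute a validation loss on a held-out split once per epoch.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # smallest decrease that counts as improvement
        self.best_loss = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses standing in for a real training loop.
example_losses = [0.60, 0.45, 0.44, 0.46, 0.47, 0.45, 0.43]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(example_losses, start=1):
    if stopper.step(loss):
        stopped_at = epoch  # in a real loop, break here and restore the best checkpoint
        break
```

In a real training loop you would typically also save a checkpoint whenever `best_loss` improves, so the weights from the best epoch can be restored after stopping.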

(For full source code, configuration, and implementation details, please view the GitHub repository using the button above.)