About Me

With a Ph.D. in AI Convergence and an M.S. in Electrical Communication Systems, I build intelligent systems that connect the physical world with machine learning. My expertise spans two complementary areas:

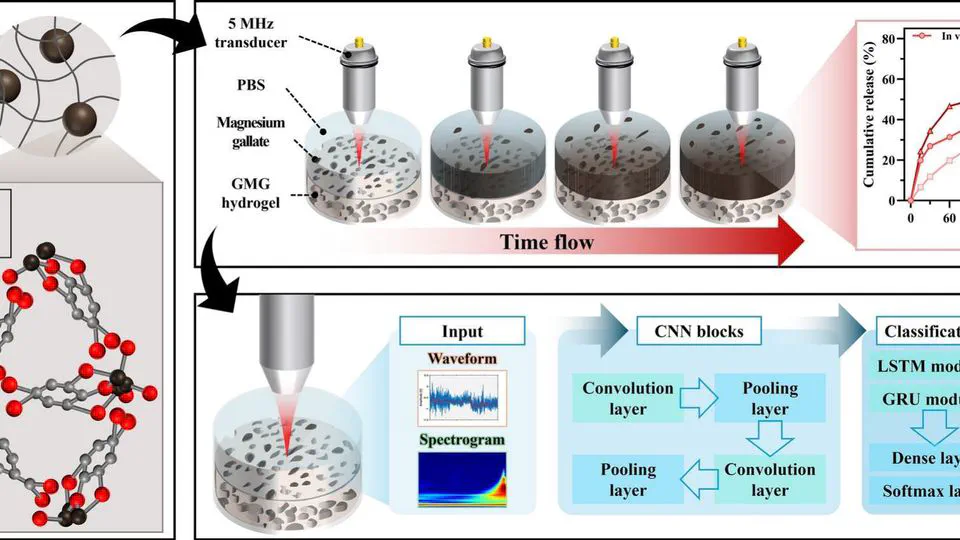

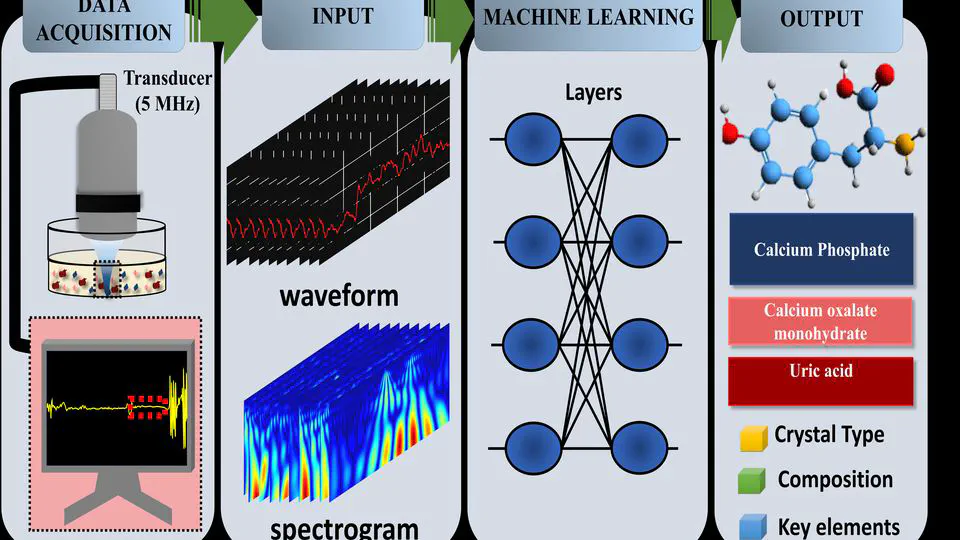

- AI & Data Science: Designing end-to-end ML pipelines for medical image processing, geometric analysis, and ultrasound signal classification, including pipelines that extract robust features from high-dimensional, low-SNR temporal data.

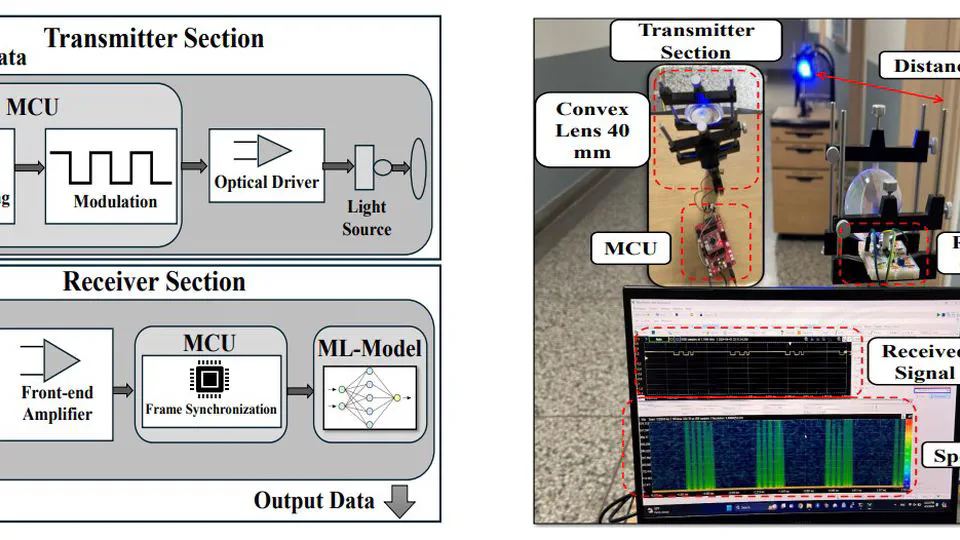

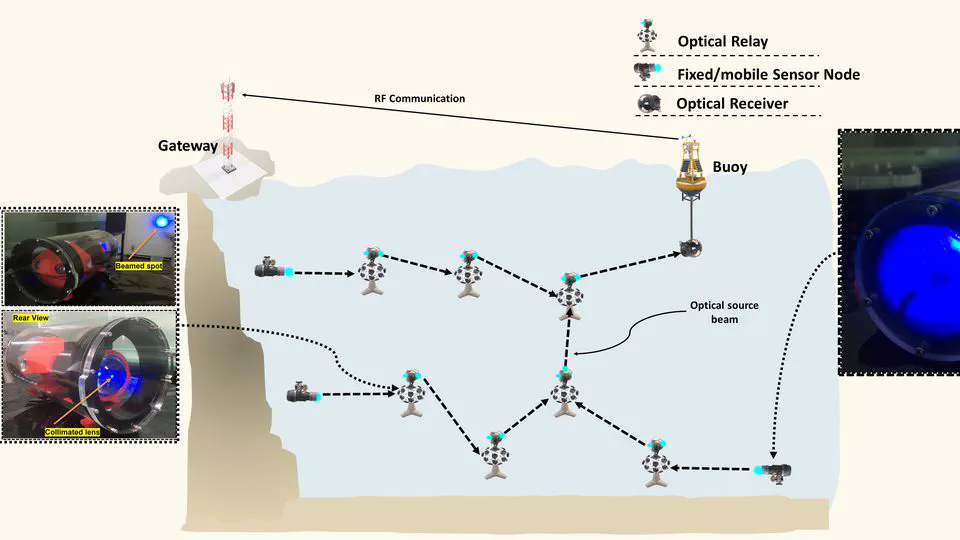

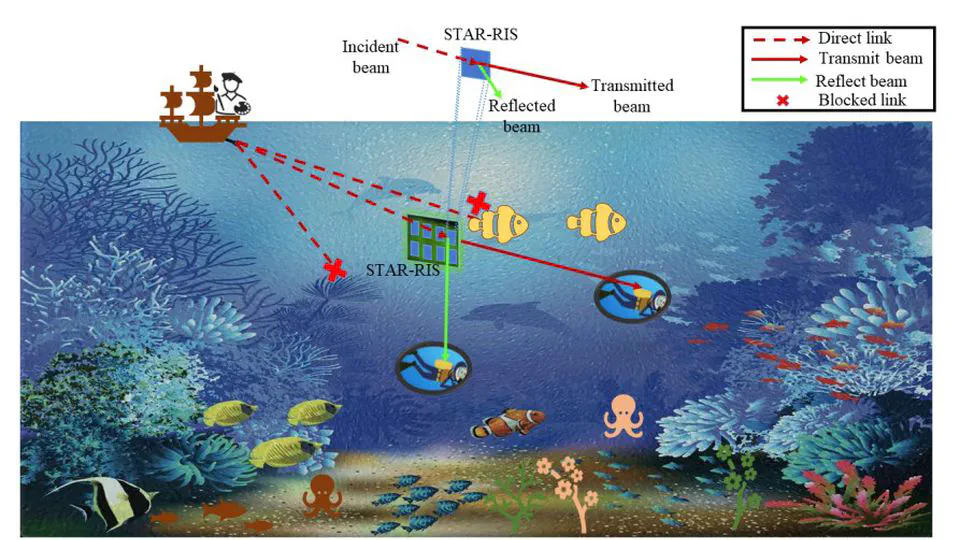

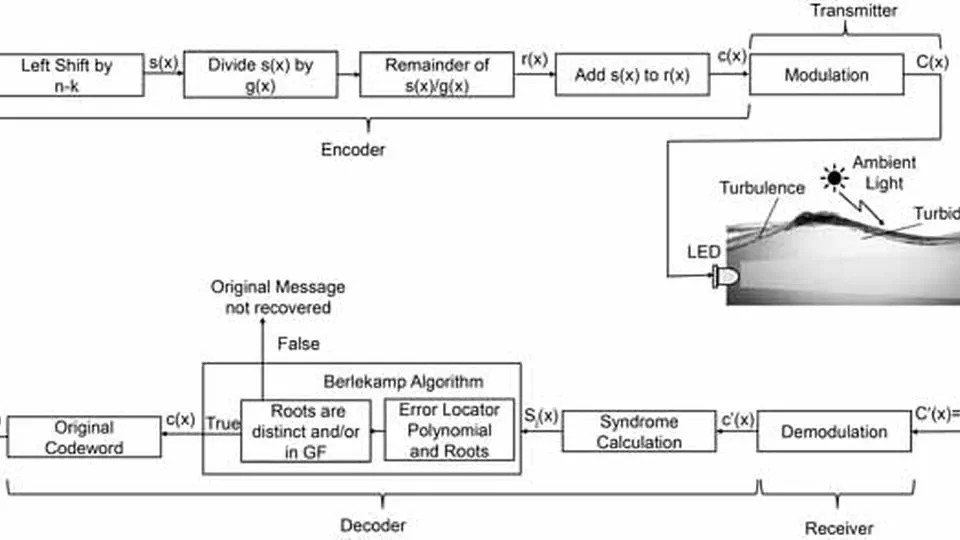

- Hardware & Communications: Prototyping next-generation IoT/UIoT modules (VLC, LoRa, UWOC) and architecting AI-assisted networks, backed by a strong foundation in RF/microwave design and fabrication.

- AI-assisted IoT

- Applied AI for Biomedical Applications

- Visible Light Communication/UWOC

- Passive RF Components

Ph.D. Artificial Intelligence Convergence

Pukyong National University, Korea

M.S. Electrical and Electronic Engineering

Soonchunhyang University, Korea

B.S. Telecommunication Engineering

University of Engineering and Technology, Pakistan

Applied AI & Medical Image Processing

Designing end-to-end ML and computer vision pipelines (e.g., CVAT, SwinIR, Mask/Keypoint R-CNN, SAM, U-Net) for the annotation, segmentation, classification, and visualization of X-ray, CT, MRI, and other medical images.

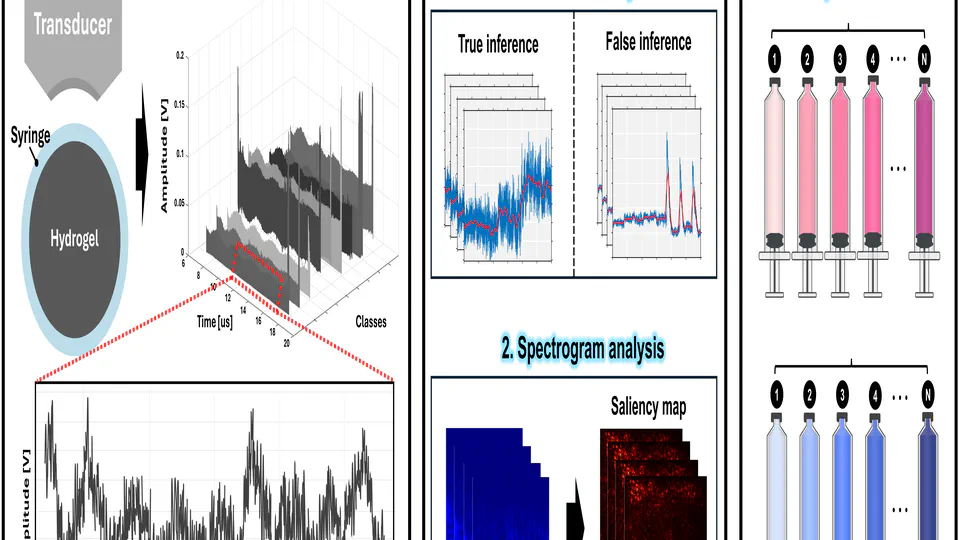

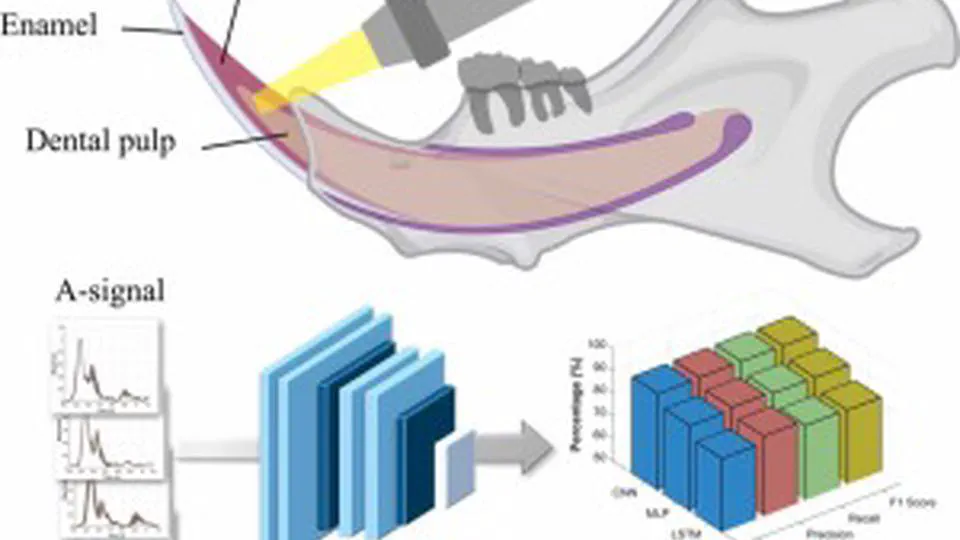

Signal Analysis & TinyML

Leveraging deep learning (CNN-based, Transformer-based, and hybrid architectures) to analyze noisy, long-sequence signals, extracting robust patterns from complex waveforms, frequency spectra, and low-SNR ultrasound data. Deploying energy-efficient TinyML models for edge inference on resource-constrained hardware, including Raspberry Pi single-board computers and STM32, Arduino, and similar microcontrollers.
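Much of the energy saving in TinyML deployment typically comes from storing and computing with 8-bit integers instead of 32-bit floats. The sketch below illustrates 8-bit affine quantization, the mapping TensorFlow Lite uses for int8 models (real value ≈ scale × (q − zero_point)). It is a minimal, framework-free sketch that assumes a single per-tensor value range (production toolchains often use per-channel scales for weights); the function names and example weights are hypothetical, and the toolchain actually used for the deployments above is not specified here.

```python
def affine_qparams(x_min: float, x_max: float, qmin: int = -128, qmax: int = 127):
    """Compute (scale, zero_point) mapping the float range [x_min, x_max] onto [qmin, qmax]."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    if x_max == x_min:  # all-zero tensor: any scale works
        return 1.0, 0
    scale = (x_max - x_min) / (qmax - qmin)
    zero_point = int(round(qmin - x_min / scale))
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Float -> int8 code: q = clamp(round(x / scale) + zero_point)."""
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(q_values, scale, zero_point):
    """int8 code -> approximate float: x ≈ scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in q_values]


if __name__ == "__main__":
    weights = [-0.92, -0.31, 0.0, 0.27, 0.88]  # hypothetical layer weights
    scale, zp = affine_qparams(min(weights), max(weights))
    q = quantize(weights, scale, zp)
    restored = dequantize(q, scale, zp)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, f"max error = {max_err:.4f} (scale/2 = {scale / 2:.4f})")
```

For values inside the calibrated range, the reconstruction error stays within half a quantization step (scale/2), which is why a representative calibration range matters more than the bit width alone.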

AI-Assisted UIoT & Optical Communication

Architecting UWOC systems and multi-hop UWSNs, exploiting relay-based diversity gain, and developing ML-based predictive monitoring from IMU telemetry.

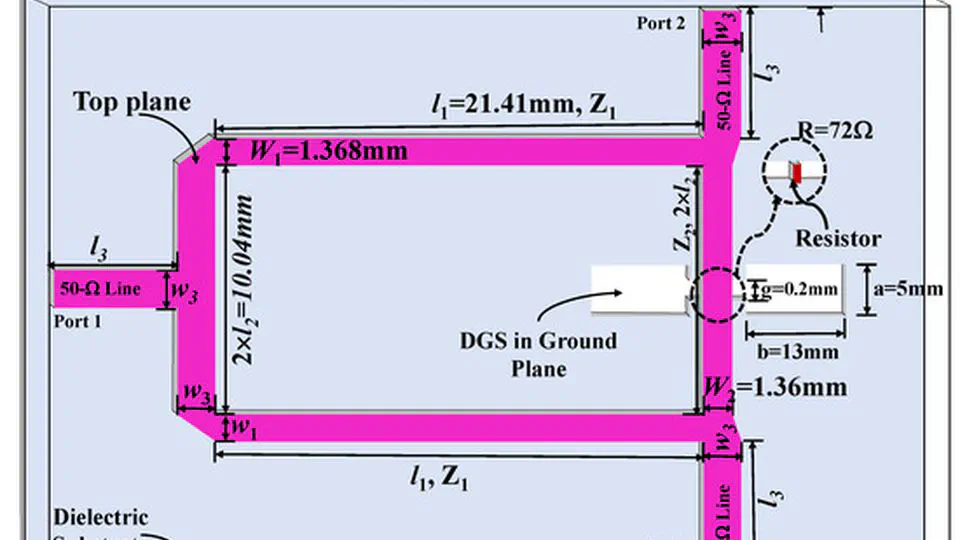

RF/Microwave Engineering

Designing, simulating, and optimizing RF/microwave passive components, including EM simulation and defected ground structure (DGS) circuit development.

- Development of ultrasound-based driver alcohol detection system for safety (UDADSS). J. H. Park, M. Salman, and H. G. Lim. Submitted, 2025.

- Hybrid VLC/RF System for IoT with Augmented Embedded ML-based Link Switching. IEEE Internet of Things Journal. Submitted, 2025.

- Identifying Risk Pathways to Chronic Homelessness: A Machine Learning Approach with Implications for Social Work and Public Health Policy. Analyses of Social Issues and Public Policy. Submitted, 2026.

Advanced Generative AI for Multi-Modal Brain Tumor Imaging

The primary goal of this research is to overcome the critical bottleneck of data scarcity in neuro-oncology by developing a robust, high-fidelity Generative AI framework. This framework leverages state-of-the-art GANs, Latent Diffusion Models (LDMs) and Transformer-based architectures to synthesize realistic, multi-modal (MRI/CT) brain tumor images. The ultimate objective is to demonstrate that augmenting training data with these synthetic images significantly and reproducibly enhances the diagnostic accuracy, robustness, and generalizability of downstream AI models for tumor segmentation (Swin-UNETR) and classification (ViT/ResNet), paving the way for more reliable clinical decision support tools.

Tech Stack: Generative AI (Pix2Pix, CycleGAN), Latent Diffusion Models (LDM), Vision Transformers (ViT), Swin-UNETR, ResNet, PyTorch

ML-aided Medical Image Analysis (Mask/Keypoint R-CNN & SAM)

I design and implement an end-to-end machine learning pipeline for red blood cell image analysis. I use CVAT to annotate images with bounding boxes, pixel-level segmentation masks, and keypoints. I apply SwinIR to enhance image quality and fine-tune a Mask R-CNN (and a Keypoint R-CNN when needed) on the custom dataset. I also use SAM (Segment Anything Model) for robust segmentation. Finally, I develop scripts that calculate the cells' geometric dimensions and compute the percentage change in each dimension between “before” and “after” images for statistical comparison.

Tech Stack: PyTorch, OpenCV, CVAT, SwinIR, SAM, Data Visualization
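The measurement step of this pipeline could look roughly like the sketch below, which works on binary masks (for example, Mask R-CNN or SAM outputs thresholded to 0/1). It assumes the “before” and “after” masks are already paired cell by cell; the measured quantities (pixel area, bounding-box width and height, equivalent circular diameter) and all function names are illustrative choices, not necessarily the dimensions used in this project. Pure Python keeps it dependency-free; in practice the same quantities are usually computed with OpenCV or NumPy.

```python
import math
from statistics import mean, stdev


def measure(mask):
    """Geometric dimensions of one binary mask given as rows of 0/1 values."""
    pixels = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not pixels:
        raise ValueError("mask contains no foreground pixels")
    rows = [r for r, _ in pixels]
    cols = [c for _, c in pixels]
    area = len(pixels)
    return {
        "area": float(area),
        "width": float(max(cols) - min(cols) + 1),   # bounding-box width
        "height": float(max(rows) - min(rows) + 1),  # bounding-box height
        # Diameter of a circle with the same area as the mask.
        "eq_diameter": 2.0 * math.sqrt(area / math.pi),
    }


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    if before == 0:
        raise ZeroDivisionError("baseline value is zero")
    return (after - before) / before * 100.0


def compare_pairs(before_masks, after_masks):
    """Per-dimension mean and sample std. dev. (0.0 if n < 2) of percentage changes."""
    if len(before_masks) != len(after_masks):
        raise ValueError("before/after masks must be paired one-to-one")
    changes = {}
    for b_mask, a_mask in zip(before_masks, after_masks):
        b, a = measure(b_mask), measure(a_mask)
        for key in b:
            changes.setdefault(key, []).append(percent_change(b[key], a[key]))
    return {
        key: {
            "mean_pct": mean(values),
            "std_pct": stdev(values) if len(values) >= 2 else 0.0,
        }
        for key, values in changes.items()
    }


if __name__ == "__main__":
    before = [[1] * 4 for _ in range(4)]  # 4x4 square, area 16
    after = [[1] * 5 for _ in range(4)]   # 4 rows x 5 columns, area 20
    print(compare_pairs([before], [after]))
```

In the example, the cell grows from a 4x4 square to a 4x5 rectangle, so the area and width changes are 25% and the height change is 0%. Because the pixel size cancels in a ratio, relative changes need no physical calibration; only absolute dimensions would need a micrometers-per-pixel factor.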